Most business leaders assume that if an automation system passes its initial tests, it is ready to operate at scale. That assumption is expensive. Tested systems can still fail in ways that create regulatory exposure, corrupt business data, and erode the operational efficiency you built the system to achieve. Validation is a different discipline entirely. It answers a harder question: does this system reliably do what it is supposed to do, under real conditions, for its intended purpose? This article gives you a structured framework for understanding validation, applying it to AI-driven automation, and building the kind of evidence-based confidence that regulators and stakeholders actually require.

Table of Contents

- What does validation actually mean for automation systems?

- Why risk-based validation is essential in regulated industries

- Core benefits: operational efficiency, compliance, and ROI

- How to avoid common pitfalls: AI self-validation and independence

- The uncomfortable truth about automation system validation

- Looking for expert validation strategies?

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Validation is risk-focused | Effective validation targets the riskiest automation functions, not just blanket system checks. |
| Regulatory compliance matters | Validated systems are essential for meeting strict regulatory and quality standards. |
| Independent verification is key | AI-driven automation requires independent checks to avoid hidden correlated errors. |
| Operational gains drive ROI | Validated automation decreases downtime and boosts return on investment by preventing costly mistakes. |
| Leadership drives validation success | Executives must champion focused validation strategies for true organizational impact. |

What does validation actually mean for automation systems?

Validation is not a synonym for testing. Testing checks whether a system produces expected outputs under controlled conditions. Validation goes further. It establishes documented evidence that a system consistently performs its intended function within defined boundaries, including edge cases, failure modes, and regulatory requirements.

For automation systems, validation means verifying that the architecture you have built is fit for its specific operational purpose. That includes the tools, the workflows, the data pipelines, and the deployment logic. Each layer must be confirmed, not just assumed.

The scope of validation typically covers:

- Functional requirements: Does the system do what the business process requires?

- Risk areas: Where could failure cause the most harm, financially or operationally?

- Data integrity: Are records accurate, traceable, and protected from unauthorized alteration?

- Change control: When the system changes, does validation hold?

- Documentation: Is there an auditable record of what was tested, why, and what the results showed?

This last point matters more than most executives realize. Regulators do not simply want to see that your system works. They want to see that you can prove it worked, under what conditions, and that you have controls in place to detect when it stops working.

"Validation is used to reduce risk by focusing effort on the most critical capabilities and failure modes rather than applying uniform testing everywhere." FDA guidance, Version 2.0

That framing is important. Validation is not about testing everything equally. It is about directing rigorous scrutiny toward the functions where failure carries the greatest consequence. For AI-driven automation systems, those high-consequence areas often include decision logic, data transformation rules, and any output that feeds into compliance reporting or financial records.

Why risk-based validation is essential in regulated industries

Once you understand what validation means, the next question is how to apply it efficiently. In regulated industries, from pharmaceuticals to financial services to healthcare technology, the answer is a risk-based lifecycle approach. This is not optional. It is the standard that both regulators and enterprise stakeholders expect.

GAMP 5, the Good Automated Manufacturing Practice guide used widely in pharmaceutical and life sciences environments, frames validation as lifecycle and risk-based work that includes defining requirements, structured testing, documentation, and change control. The key insight from GAMP 5 is that validation effort should scale with system complexity and risk level. A low-risk reporting tool needs less validation rigor than an AI model that controls manufacturing parameters or flags compliance violations.

Here is how risk-based validation typically scales across system types:

| System type | Risk level | Validation depth required |
|---|---|---|
| Static reporting dashboards | Low | Basic functional testing, documentation |
| Automated data pipelines | Medium | Requirements tracing, integration testing, audit logs |
| AI-driven decision systems | High | Full lifecycle validation, independent verification, change control |
| Regulated quality system software | Critical | Regulatory submission-grade documentation, traceability matrix |

This table is not theoretical. It reflects the practical reality of what auditors and regulatory bodies look for when they review your automation infrastructure.

For executives overseeing automation system validation in complex environments, the practical implication is clear: you cannot apply the same validation protocol to every system in your stack. You need a tiered approach that concentrates resources on the functions where failure creates the most exposure.

Effective project requirements management for AI compliance is a foundational step here. Before you can validate a system, you need clearly documented requirements. Vague requirements produce vague validation. If your team cannot articulate exactly what the system is supposed to do, in measurable terms, validation becomes a checkbox exercise rather than a genuine risk control.
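
One way to make "measurable requirements" concrete is a small traceability check that lists every requirement no test case claims to verify. The sketch below is illustrative only: the `Requirement` fields, the `REQ-`/`TC-` identifiers, and the sample requirements are assumptions for the example, not taken from any specific standard or tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    req_id: str
    text: str
    risk: str  # "low", "medium", "high", or "critical" (assumed labels)


def untraced_requirements(requirements, test_links):
    """Return requirements that no test case claims to verify.

    test_links maps a test case ID to the list of requirement IDs it covers.
    """
    covered = {req_id for ids in test_links.values() for req_id in ids}
    return [r for r in requirements if r.req_id not in covered]


# Hypothetical requirements for an invoice-approval workflow.
requirements = [
    Requirement("REQ-001", "Reject invoices whose total is negative", "high"),
    Requirement("REQ-002", "Write every approval decision to the audit log", "critical"),
    Requirement("REQ-003", "Render the weekly summary dashboard", "low"),
]

# Hypothetical test cases and the requirements each one traces to.
test_links = {
    "TC-010": ["REQ-001"],
    "TC-011": ["REQ-001", "REQ-003"],
}

gaps = untraced_requirements(requirements, test_links)
for r in gaps:
    print(f"{r.req_id} ({r.risk}) has no linked test: {r.text}")
```

In this example the critical audit-log requirement is the one left untested, which is exactly the kind of gap a vague requirements list tends to hide.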

Risk-based validation is not about doing less work. It is about doing the right work in the right places.

Pro Tip: Map your automation workflows to your organization's risk register before designing your validation plan. This ensures that validation depth tracks directly to business and regulatory exposure, not just technical complexity.
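
As a rough illustration of that mapping, the sketch below scores risk register entries and assigns each workflow a validation depth using the tiers from the risk table earlier in this section. The severity and likelihood scales, the score cut-offs, and the sample workflows are all assumptions chosen for the example; they do not come from GAMP 5 or FDA guidance, and a real program would set them with its quality and compliance teams.

```python
from dataclasses import dataclass

# Depth descriptions mirror the tiers in the risk table in this section.
DEPTH_BY_TIER = {
    "low": "Basic functional testing, documentation",
    "medium": "Requirements tracing, integration testing, audit logs",
    "high": "Full lifecycle validation, independent verification, change control",
    "critical": "Regulatory submission-grade documentation, traceability matrix",
}


@dataclass(frozen=True)
class RiskEntry:
    workflow: str
    severity: int    # 1 (minor) to 5 (severe); assumed scale
    likelihood: int  # 1 (rare) to 5 (frequent); assumed scale
    regulated: bool  # True if the output feeds a regulated record


def tier_for(entry):
    """Map a risk register entry to a tier using illustrative cut-offs."""
    score = entry.severity * entry.likelihood
    if entry.regulated and score >= 15:
        return "critical"
    if score >= 12:
        return "high"
    if score >= 6:
        return "medium"
    return "low"


# Hypothetical risk register entries.
register = [
    RiskEntry("Weekly KPI dashboard", 1, 2, False),
    RiskEntry("Nightly ledger sync pipeline", 3, 3, False),
    RiskEntry("AI invoice approval", 4, 4, False),
    RiskEntry("Batch release record generation", 5, 3, True),
]

plan = {e.workflow: tier_for(e) for e in register}
for workflow, tier in plan.items():
    print(f"{workflow}: {tier} -> {DEPTH_BY_TIER[tier]}")
```

The point of the design is that the tier is derived from business and regulatory exposure, so a technically simple workflow can still land in the highest tier if its output feeds a regulated record.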

Core benefits: operational efficiency, compliance, and ROI

Validation is often framed as a compliance cost. That framing is wrong, and it leads to underinvestment. When executed correctly, validation is one of the highest-return activities in your automation program.

Consider what unvalidated systems actually cost. A single data integrity failure in a regulated environment can trigger a warning letter, a product recall, or a consent decree. In financial services, an unvalidated automated decision system can produce systematic errors that affect thousands of transactions before anyone notices. The cost of remediation, including legal exposure, operational disruption, and reputational damage, can far exceed the cost of proper validation.

The FDA guidance is explicit: validation requirements extend to off-the-shelf and automated equipment and quality-system software, and validation must show accuracy, reliability, consistent intended performance, and the ability to discern invalid or altered records. This applies whether you built the system internally or purchased it from a vendor. The responsibility for validation sits with the organization deploying the system, not the vendor who sold it.
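
To give a feel for what "the ability to discern invalid or altered records" can look like in practice, here is a minimal sketch of a hash-chained record log in which each entry's hash depends on the previous one, so an edit to an earlier record is detectable. This is one common technique, not something the guidance prescribes; the record fields and function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(prev_hash, payload):
    # Canonical JSON so the same payload always produces the same hash.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(chain, payload):
    """Append a record whose hash covers its payload and the previous hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append(
        {"payload": payload, "prev": prev_hash, "hash": _digest(prev_hash, payload)}
    )


def first_invalid_index(chain):
    """Return the index of the first record that fails verification, or None."""
    prev_hash = GENESIS
    for i, rec in enumerate(chain):
        if rec["prev"] != prev_hash or rec["hash"] != _digest(prev_hash, rec["payload"]):
            return i
        prev_hash = rec["hash"]
    return None


log = []
append_record(log, {"batch": "B-1001", "result": "pass"})
append_record(log, {"batch": "B-1002", "result": "fail"})
append_record(log, {"batch": "B-1003", "result": "pass"})
before = first_invalid_index(log)  # chain is intact at this point

# Simulate an unauthorized edit to an earlier record.
log[1]["payload"]["result"] = "pass"
after = first_invalid_index(log)

print("before edit:", before)
print("after edit:", after)
```

A chain like this only flags edits made by someone who cannot also recompute the hashes, so a production design would typically add access controls and store or sign the latest hash somewhere the editing party cannot reach.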

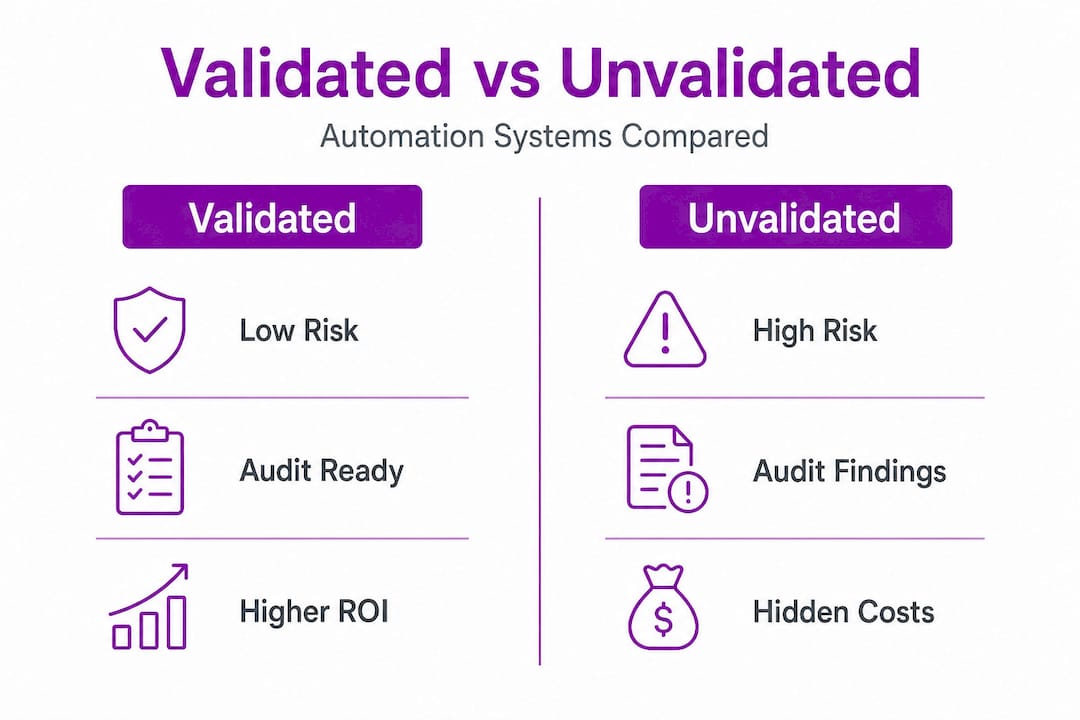

Here is a direct comparison of validated versus unvalidated automation environments:

| Dimension | Unvalidated automation | Validated automation |
|---|---|---|
| Regulatory risk | High, audit findings likely | Controlled, documented evidence available |
| Data integrity | Uncertain, hard to trace | Verified, auditable records |
| Downtime from failures | Reactive, unpredictable | Proactive detection, faster recovery |
| Scalability | Risky, errors compound at scale | Structured growth with controlled change |
| ROI timeline | Eroded by rework and penalties | Protected and extended by reliability |

The automation platform benefits that matter most to executives are not just speed and efficiency gains. They are the risk-adjusted returns that come from systems you can trust, audit, and scale without fear of hidden failure modes.

Key operational benefits of validated automation include:

- Reduced unplanned downtime because failure modes are identified and controlled before deployment

- Faster audit response because documentation is structured and accessible

- Higher data confidence because records are verified and traceable

- Lower rework costs because errors are caught at the validation stage, not after they propagate through production

- Scalable growth because validated systems have defined change control processes that prevent regression
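
The change control point can be sketched as a simple fingerprint comparison: record a hash of the configuration that was validated, then flag any deployed configuration that no longer matches it. The configuration keys and values below are hypothetical, and a real change control process would also cover code, models, and data sources rather than a single dictionary.

```python
import hashlib
import json

_MISSING = object()  # sentinel so a removed key differs from a key set to None


def fingerprint(config):
    """Hash a configuration in canonical form so key order does not matter."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_keys(baseline, current):
    """List the keys whose values differ between two configurations."""
    keys = set(baseline) | set(current)
    return sorted(
        k for k in keys if baseline.get(k, _MISSING) != current.get(k, _MISSING)
    )


# Configuration captured at the time of validation (hypothetical values).
validated_config = {
    "model_version": "1.4.0",
    "approval_threshold": 0.85,
    "source_table": "invoices_v2",
}
validated_fingerprint = fingerprint(validated_config)

# Configuration currently deployed, with one undocumented change.
deployed_config = dict(validated_config, approval_threshold=0.80)

validation_holds = fingerprint(deployed_config) == validated_fingerprint
drift = changed_keys(validated_config, deployed_config)

if validation_holds:
    print("Deployed configuration matches the validated baseline.")
else:
    print("Revalidation needed; changed keys:", drift)
```

A check like this does not decide whether a change is acceptable. It only ensures that a change cannot reach production without someone being asked whether the existing validation evidence still applies.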

Across high-performing automation environments, the consistent theme is that reliability is engineered in, not discovered after the fact. Validation is the engineering discipline that makes reliability repeatable.

The ROI case is straightforward. Organizations that invest in structured validation spend more upfront but spend dramatically less on remediation, regulatory response, and system failures over the lifecycle of the automation. That is not a soft benefit. It is a measurable financial outcome.

How to avoid common pitfalls: AI self-validation and independence

AI-driven automation introduces a specific validation challenge that traditional software validation frameworks were not designed to address. The problem is self-validation, and it is more common than most teams realize.

When an AI system is used to check its own outputs, or when a single model is used to both generate and verify results, the errors in the system are correlated. The same blind spots that cause the model to produce a wrong answer also cause it to fail to detect that the answer is wrong. This is not a theoretical concern. It is a structural flaw in how many AI automation systems are currently validated.
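
A toy example makes the correlated-error problem visible. In the sketch below, a generation step contains a deliberate defect (tax is applied before the discount instead of after). A check that reuses the same logic never flags it, while a check written separately from the requirement does. The functions and the pricing rule are invented for illustration and stand in for a model and its verifier.

```python
def generate_total(subtotal_cents, discount_cents, tax_percent):
    """Generation layer with a deliberate defect: tax is applied before the discount."""
    taxed = subtotal_cents + subtotal_cents * tax_percent // 100
    return taxed - discount_cents


def self_check(case, output):
    """Circular check: re-runs the same logic, so it shares the same blind spot."""
    return generate_total(*case) == output


def independent_check(case, output):
    """Separately written check derived from the requirement:
    tax applies to the discounted amount."""
    subtotal_cents, discount_cents, tax_percent = case
    discounted = subtotal_cents - discount_cents
    return discounted + discounted * tax_percent // 100 == output


# (subtotal in cents, discount in cents, tax rate in percent)
cases = [(10_000, 0, 10), (10_000, 1_000, 10), (5_000, 500, 20)]
outputs = [generate_total(*c) for c in cases]

self_failures = sum(not self_check(c, o) for c, o in zip(cases, outputs))
independent_failures = sum(not independent_check(c, o) for c, o in zip(cases, outputs))

print("failures caught by self-check:", self_failures)
print("failures caught by independent check:", independent_failures)
```

The self-check reports zero failures because it can only confirm that the system agrees with itself. The independent check catches the two discounted cases, which are the only ones where the defect changes the result.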

Research on AI self-validation supports this concern: self-validation and single-model checks can be misleading because the generating and checking steps share the same correlated errors. The architecture should therefore separate roles and use independent verification where feasible.

For automated system verification in AI-driven environments, the practical solution involves architectural separation. Here is a structured approach:

- Separate the generation layer from the verification layer. Do not use the same model or the same data pipeline to both produce outputs and check them.

- Use independent data sets for validation. Validation data must be held out from training and fine-tuning processes to ensure genuine independence.

- Implement role separation in your team. The team that builds the system should not be the only team that validates it. Independent review catches assumptions that internal teams normalize.

- Benchmark against external standards. Where industry benchmarks or regulatory reference datasets exist, use them. Internal benchmarks alone are insufficient for high-risk systems.

- Document the independence of your verification process. Auditors and regulators will ask how you ensured that your validation was not circular. Have a clear, documented answer.
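
Two of the steps above, independent data sets and role separation, lend themselves to a simple automated check whose output can go straight into the validation record. The sketch below is a minimal illustration; the record IDs, team member names, and report fields are hypothetical.

```python
def overlap(first, second):
    """Return the sorted items that appear in both collections."""
    return sorted(set(first) & set(second))


def independence_report(training_ids, validation_ids, builders, reviewers):
    """Summarize whether validation data and reviewers are independent."""
    leaked = overlap(training_ids, validation_ids)
    shared_people = overlap(builders, reviewers)
    return {
        "leaked_record_ids": leaked,
        "shared_team_members": shared_people,
        "independent": not leaked and not shared_people,
    }


# Hypothetical inputs: one record appears in both training and validation data.
report = independence_report(
    training_ids=["rec-001", "rec-002", "rec-003"],
    validation_ids=["rec-003", "rec-004"],
    builders=["builder-a", "builder-b"],
    reviewers=["reviewer-c"],
)

for field, value in report.items():
    print(f"{field}: {value}")
```

Here the report marks the setup as not independent because `rec-003` was seen during training. Keeping the report alongside the validation results gives auditors a direct answer to the question of whether the validation was circular.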

Independent system validation for trading platforms and other high-stakes automation environments follows the same pattern: architectural separation enforced at the design stage, not added as an afterthought.

Pro Tip: When designing AI automation validation, treat your verification layer as a separate system with its own requirements, its own test data, and its own documentation trail. This structural discipline is what separates genuine validation from a compliance performance.

The uncomfortable truth about automation system validation

Here is what we see consistently across enterprises that have invested in automation: most of them have tested their systems. Very few have actually validated them. The gap between those two states is where regulatory risk lives, where operational failures originate, and where ROI gets quietly destroyed.

The conventional wisdom in enterprise automation is that rigorous testing equals validation. It does not. Testing is necessary but not sufficient. Validation requires documented evidence of fitness for purpose, risk-based focus on critical functions, and independent verification that the system performs as intended under real-world conditions. Most enterprise testing programs do not meet that standard.

The deeper problem is that executive leaders often do not know the difference until something goes wrong. A failed audit, a data integrity incident, or a systematic error in an AI-driven workflow forces the organization to confront the gap between what they thought they had and what they actually built.

The right response is not to validate everything exhaustively. That approach is as flawed as validating nothing. As the FDA guidance makes clear, validation should focus effort on the most critical capabilities and failure modes rather than applying uniform testing everywhere. The leadership discipline here is prioritization, not comprehensiveness.

We believe the most effective executive posture is this: treat validation as a risk management tool, not a compliance ritual. Ask where failure would hurt most. Direct your validation resources there. Build the documentation that proves your system is fit for its purpose in those specific areas. Then maintain that evidence as the system evolves.

That is what real validation looks like. It is focused, structured, and proportionate to actual risk. It is also the foundation on which scalable, trustworthy automation is built.

Looking for expert validation strategies?

If this article clarified the gap between testing and genuine validation in your automation environment, the next step is building the infrastructure to close it. At Starks Global Group, we design and document automation architectures with validation built into every layer, from tool selection through deployment logic and ongoing change control.

Our platform gives you access to verified automation blueprints, structured workflow documentation, and the layered infrastructure frameworks that regulated and high-performance enterprises actually need. Whether you are starting a new automation program or strengthening an existing one, our validation solutions for automation are built to meet real-world compliance and operational standards. Explore our platform and see how structured validation becomes a competitive advantage, not just a regulatory obligation.

Frequently asked questions

What is the difference between testing and validation for automation systems?

Testing checks if the system works as expected under defined conditions, while validation proves it meets its intended use and compliance standards across critical functions. As FDA guidance establishes, validation focuses on the most critical capabilities and failure modes, which goes well beyond standard testing scope.

How do risk-based validation strategies improve operational efficiency?

By concentrating validation effort on high-impact functions, organizations reduce wasted resources and catch the failures that actually matter before they reach production. GAMP 5 frames this lifecycle and risk-based approach as the standard for complex automated systems, ensuring documentation and change control scale with actual risk.

Why is independent verification important for AI-driven automation?

AI systems often produce correlated errors, meaning the same model that generates a flawed output may also fail to detect it. Research on self-validating AI confirms that architectural separation and independent verification are essential to ensure real-world reliability in AI-driven environments.

Do off-the-shelf automation systems require validation?

Yes. Regulatory standards make clear that off-the-shelf systems must be validated for intended use, accuracy, reliability, and the ability to discern invalid or altered records, regardless of the vendor's own quality claims. The deploying organization carries the validation responsibility.